Over 140,000 kids from around the world were tested to see which is most important for success: grit or growth mindset.

The answer? Neither of them.

The code here will allow you to reproduce the main findings in this post.

Debunking the myths

Google ‘education myths debunked’ and you’ll soon come across something about learning styles.

Each child had a preferred way of learning – visual, auditory or kinaesthetic – the idea went, and teachers had to accommodate this.

This was based on evidence.

Until it wasn’t.

This is how teaching works. Strategies are supposedly evidence based. But it’s funny how often the evidence is called into doubt just as the next fashionable theory arrives.

Whose test paper will you take a peek at?

A few years ago, ‘growth mindset’ was in fashion.

The original concept came from Stanford University professor Carol Dweck and colleagues.[1] If you have a growth mindset, you believe your intelligence and abilities are malleable. It isn’t just what you’re born with; it’s what you do to improve your abilities that really matters.

The classic test of growth mindset went a little like this – a kid takes a test. Afterwards, they’re given the opportunity to look at someone else’s test. Do they choose the test of the student who did better, to learn from them, or the one who did worse, as an ego boost? First choice = growth mindset, second choice = fixed mindset.

Each school had posters up about how to foster it. In 2015, the education secretary Nicky Morgan wanted to ‘nurture a generation’ through promoting growth mindset.

The academic work on growth mindset was complex, but schools often stripped this nuance away. Inevitably, when the classroom interventions didn’t match the effects seen in the original studies, it was this translation that was blamed.

In 2019, a randomised controlled trial involving over 100 schools and over 5,000 students found that students exposed to training in how to build a growth mindset made… no extra progress compared to those without the training.

As with all fashions, growth mindset eventually faded from view. The posters became tattered and the school assemblies moved onto new topics.

Growth mindset never completely disappeared. It’s still mentioned from time to time. The fossilised remains are still there, on outdated teaching and learning pages on forgotten corners of school websites.

But there’s a new kid on the block.

The secret to West Point success – and everything else

By 2019, the year growth mindset’s lustre faded in England, the UK government was already talking about the importance of developing ‘character traits’ like conscientiousness and perseverance.

By 2025, this had become a central focus for the Department for Education. Developing ‘much-needed grit’, as the Education and Health Secretaries Bridget Phillipson and Wes Streeting put it, would help young people in all areas of life.

Grit, according to Phillipson, is ‘the resilience, the ability to cope with life’s ups and downs, about the challenges that are thrown at you’. In fact, it’s ‘essential for academic success’.

The concept – grit – was popularised by psychologist Angela Duckworth in her book of the same name.[2] She led much of the pioneering research in the area. And grit seemed to explain things no other concept could.

Take the elite West Point military training academy in the US. Out of 14,000 high school applicants – who are nearly all star athletes as well as top academic performers – only 1,200 are admitted. Of those, twenty percent drop out before graduation, including a substantial number in the first weeks.

Fitness didn’t predict who would leave; nor did academic achievement. Then Duckworth stumbled on the idea of grit. Suddenly, just by giving cadets a few questions, she could predict who would make it to graduation and who would leave a few weeks in.

And grit didn’t just explain who would graduate from West Point. It seemed to explain success in very different areas of life, from who’d be crowned spelling bee champion to who would top a company’s sales figures.

But what exactly is grit?

Grit, for Duckworth, has two aspects – perseverance and consistency of interests. Perseverance obviously encapsulates how hard you are willing to work. Consistency of interests looks at whether you flit between hobbies and activities or stay focused year after year.

But interest is often forgotten, especially in schools. It doesn’t matter whether you’re interested in English or maths, you still need to work hard towards your exams.

Duckworth’s own work doesn’t help with this simplification. She sums up grit in two equations:

talent x effort = skill

skill x effort = achievement

Effort, then, is the most important aspect of achievement. Many schools can be forgiven for reducing grit to the idea that you need to work hard (even when you don’t feel like it) to succeed.

The question is, are they right?

Does persevering really make a difference?

So, which is best?

In an ideal world, we’d take a hundred thousand kids from across the world and give them a questionnaire. We’d ask them about grit and growth mindset.

We could then, for example, take the top 10% in each group – grit and growth mindset – and compare their scores in maths, reading and science.

Who did best, those top in growth mindset or grit?

Luckily, we don’t need to – someone’s already done it for us. The OECD’s PISA test is taken by hundreds of thousands of fifteen-year-olds around the world every few years. As well as assessing which country’s students do best in mathematics, reading and science, they look at ‘soft skills’ including growth mindset and perseverance.

Perseverance? What happened to grit?

First, as the Education Secretary suggests, while grit is a complex concept, most schools are only interested in the perseverance part. It’s about trying hard when things get tough.

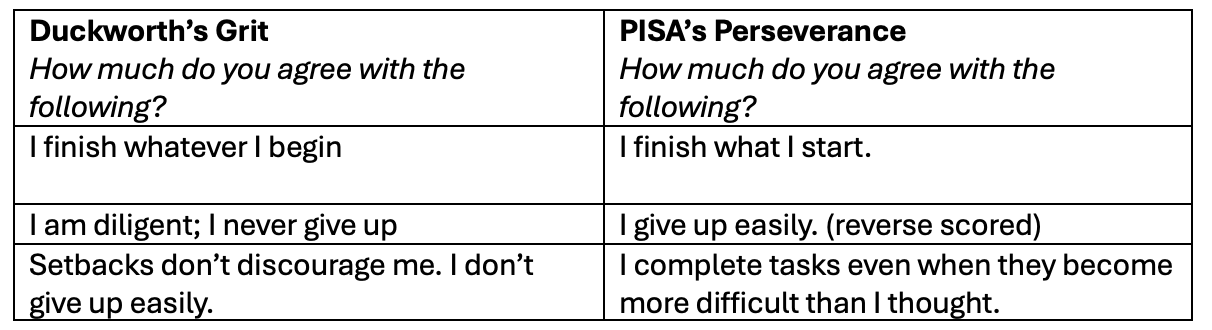

Also, PISA’s perseverance construct isn’t that far away from Duckworth’s original grit scale.

Take these two:

Although I should probably just say ‘perseverance’, ‘grit’ is a bit catchier. I’ll use the two interchangeably from now on, but bear in mind that Duckworth’s scale includes consistency of interests too, e.g. ‘My interests change from year to year’ (reverse scored, so ticking ‘agree’ gets you a low score).
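To make the reverse scoring concrete, here is a minimal sketch. It assumes a five-point agreement scale coded 1 to 5; the function name and the coding are illustrative assumptions, not taken from Duckworth’s scale or from PISA’s data files.

```python
def reverse_score(response, scale_min=1, scale_max=5):
    """Flip a Likert response so that strong agreement yields the lowest score."""
    if not scale_min <= response <= scale_max:
        raise ValueError("response is outside the scale")
    return scale_max + scale_min - response


# 'My interests change from year to year': strongly agreeing (5) scores 1.
print(reverse_score(5))  # 1
print(reverse_score(2))  # 4
```

The midpoint of the scale is unchanged by the flip, which is why reverse-scored items can be averaged with ordinary ones.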

The growth mindset scale is a cut-down version of Dweck’s original questions. The key question is this one: ‘Agree/disagree: Your intelligence is something about you that you cannot change very much.’

So, what happens when we put growth mindset and grit (perseverance) head-to-head? Which has the strongest effect on test scores?

But how WEIRD is our sample?

The analysis below is quick and simple. It’s an approximation. A thorough analysis would take many more stages and deal with a few issues a bit more rigorously than I have.

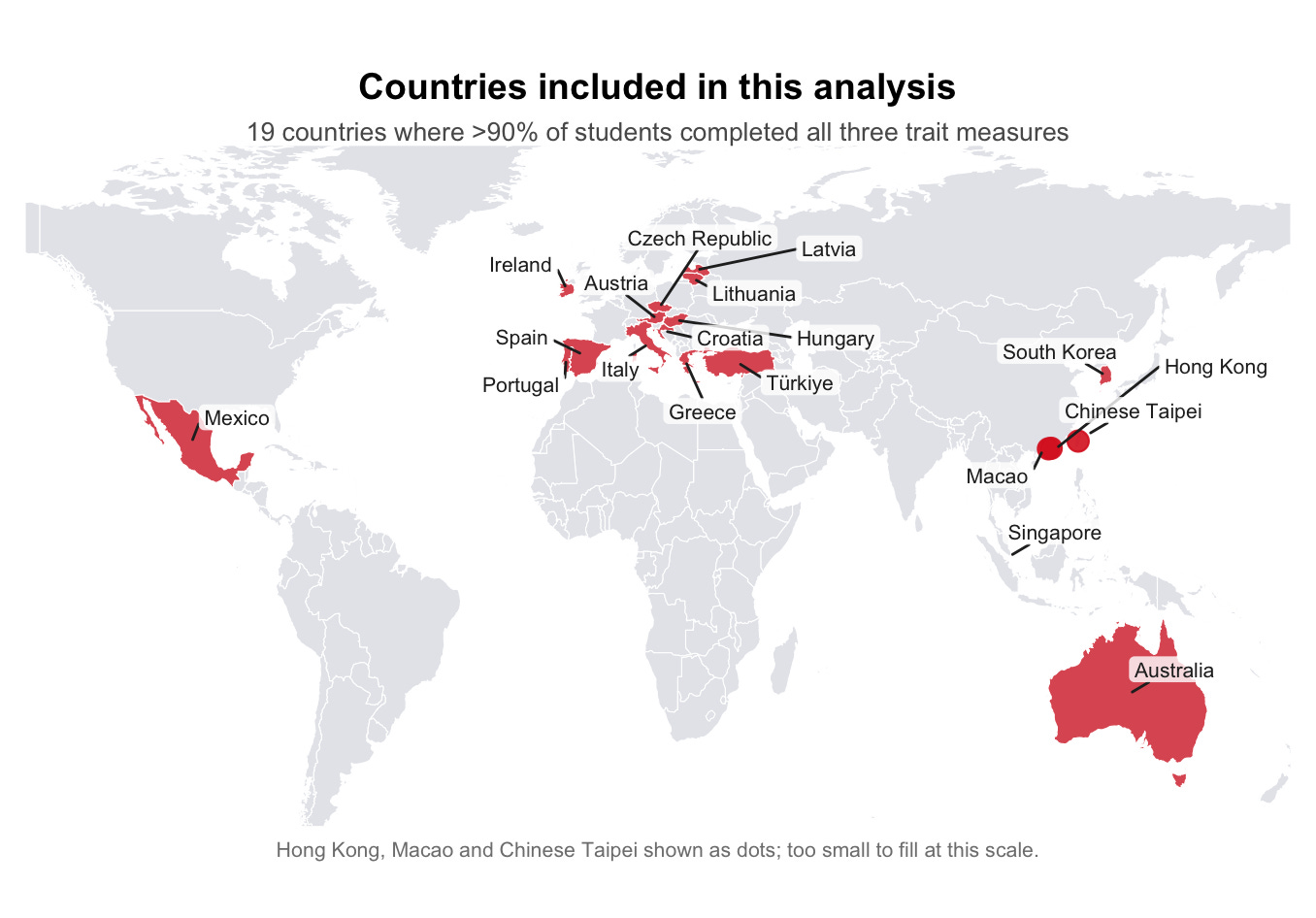

One of the main problems is that kids taking the PISA tests don’t answer all the questions, only a selection of them. If we look at just the countries where at least 90% of kids answered questions about growth mindset and perseverance, we’re left with only 19 countries out of the 80 that took part.

That doesn’t sound like much, but the sample still includes 145,000 students.
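The country filter can be sketched roughly as follows. This is an illustration rather than the code behind this post: the field names are hypothetical, and it assumes a student counts as having ‘answered’ only if every listed trait has a value.

```python
from collections import defaultdict


def countries_with_coverage(students, traits, threshold=0.9):
    """Return the countries where at least `threshold` of students answered every trait."""
    totals = defaultdict(int)
    answered = defaultdict(int)
    for s in students:
        totals[s["country"]] += 1
        # A missing answer is represented as None (an assumption of this sketch).
        if all(s.get(t) is not None for t in traits):
            answered[s["country"]] += 1
    return sorted(c for c in totals if answered[c] / totals[c] >= threshold)


# Toy data, not PISA records: country A has 95% coverage, country B only 50%.
students = (
    [{"country": "A", "perseverance": 0.1, "growth_mindset": 0.3}] * 19
    + [{"country": "A", "perseverance": None, "growth_mindset": 0.3}] * 1
    + [{"country": "B", "perseverance": 0.2, "growth_mindset": 0.1}] * 5
    + [{"country": "B", "perseverance": 0.2, "growth_mindset": None}] * 5
)
kept = countries_with_coverage(students, ["perseverance", "growth_mindset"])
print(kept)  # ['A']
```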

The main worry is whether these countries are really that representative, globally speaking:

In a 2010 paper, psychologist Joseph Henrich and colleagues warned that a lot of published research findings were skewed towards Western, Educated, Industrialized, Rich and Democratic (WEIRD) nations.

Did these findings hold in non-WEIRD nations? There wasn’t enough evidence to say. And the sample of 19 countries above isn’t immune from this criticism: most obviously, there are no African or South American nations included at all.

But the sample is more diverse than it might look at first glance: alongside Western European nations, the sample includes Türkiye, Mexico and several high-performing East Asian systems whose educational cultures differ substantially from Western norms.

Then there’s a second simplification (and complication).

PISA doesn’t give each student a single score for mathematics, reading and science; instead, it estimates a range of possible scores. A thorough analysis would take all of them into account.

I’m just going to use what’s called the first ‘plausible value’. So, bear in mind it’s an estimate, but it will get us close enough. Even published, peer-reviewed papers like this Nature article do this sometimes.[3]
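The difference between the shortcut and the fuller approach looks something like this in code. It is a sketch under assumptions: the key names (`pv1_math` and so on) are made up for the example, and the statistic is a plain unweighted mean.

```python
from statistics import mean


def mean_from_first_pv(students, subject):
    """Shortcut: treat the first plausible value as the student's score."""
    return mean(s[f"pv1_{subject}"] for s in students)


def mean_from_all_pvs(students, subject, n_pvs):
    """Fuller approach: compute the statistic once per plausible value, then average."""
    per_pv = [
        mean(s[f"pv{i}_{subject}"] for s in students)
        for i in range(1, n_pvs + 1)
    ]
    return mean(per_pv)


# Two made-up students with three plausible values each.
students = [
    {"pv1_math": 500, "pv2_math": 510, "pv3_math": 490},
    {"pv1_math": 520, "pv2_math": 500, "pv3_math": 510},
]
shortcut = mean_from_first_pv(students, "math")
fuller = mean_from_all_pvs(students, "math", n_pvs=3)
print(shortcut, fuller)
```

The key point is that the statistic is computed separately on each plausible value and the results are averaged; the plausible values are not averaged into one score per student first.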

Before we release the results, a word about what the scores actually mean.

Can you really make airplanes out of dogs?

Six birds flew over the trees

The window sang loudly

Airplanes are made of dogs

Do these sentences make sense?

If you can answer correctly, you’re on the way to a decent score on the reading fluency section.

There are trickier and more interesting comprehension questions – on a made-up blog about Rapa Nui, for example, and discovering whether you can give aspirin to chickens via a chat forum.

The OECD keeps a tight hold of most of the questions, though, which allows them to compare scores. If the UK scores 500 points in science this year, is this really an improvement over the 490 last time or is this grade inflation?

If everyone answers the same questions every four years, it’s easy to compare.

What’s a ‘good’ PISA score? In some ways, it depends how your country did last time. Beat the previous score and your nation’s Education Secretary will be on the phone to the papers to organise a press conference.

It also depends on how your rivals do. And, of course, the pace setters, like Singapore, Korea, Japan and their neighbours like Taipei (Taiwan), Macao (China) and Hong Kong. These top countries might be pushing 550.

The ‘average’ used to be 500. It’s slipped a bit since then to around the 470s. And this is obviously where you’ll find the mid-ranking countries like Israel, or Malta or the Slovak Republic. Countries nearer the bottom, like Kosovo or Cambodia, fall below 380.

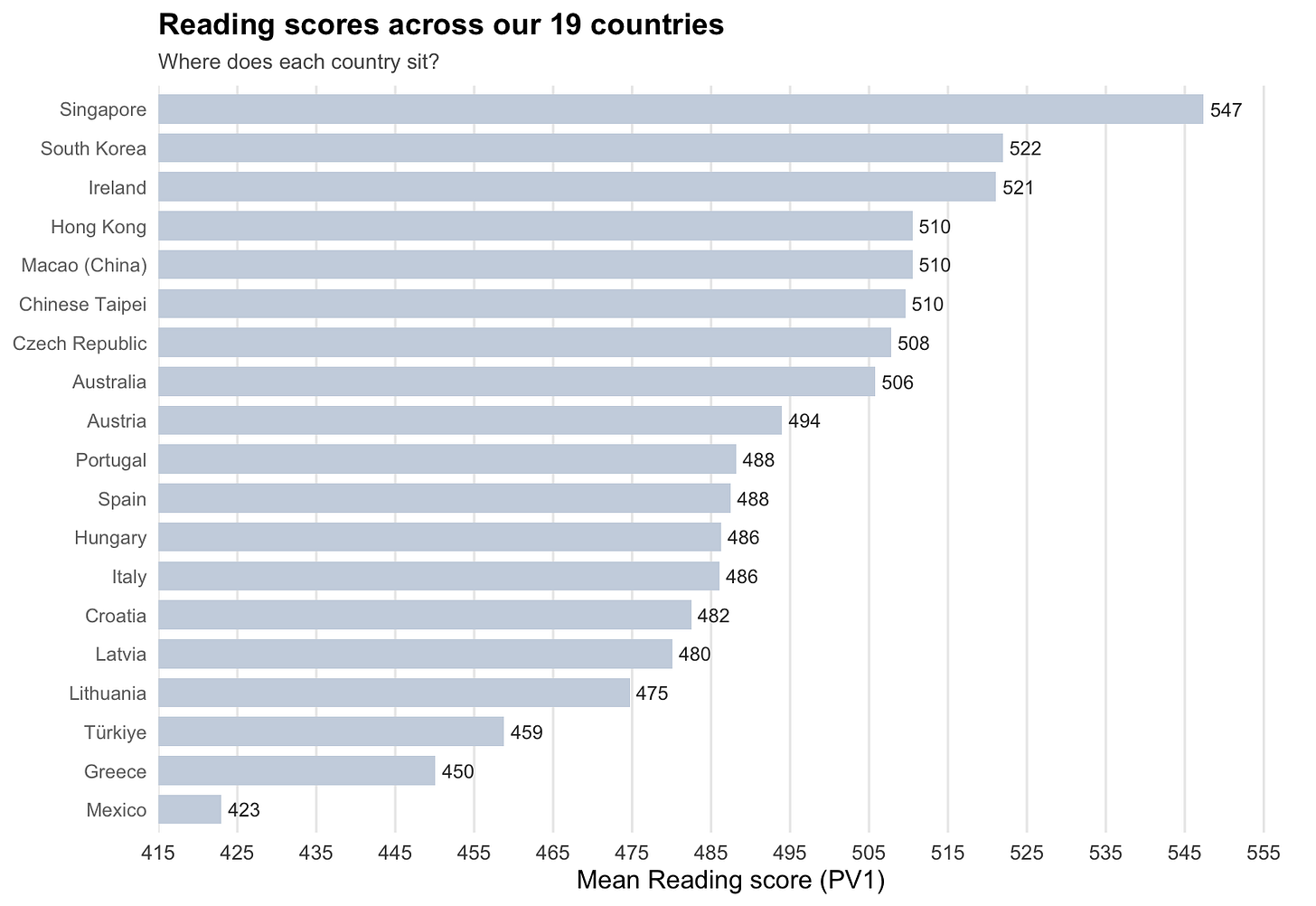

To give an idea of the spread of results, here are the reading scores for our 19 countries:

Enough preamble.

How do the gritty students compare to those with growth mindset?

And the winner is…

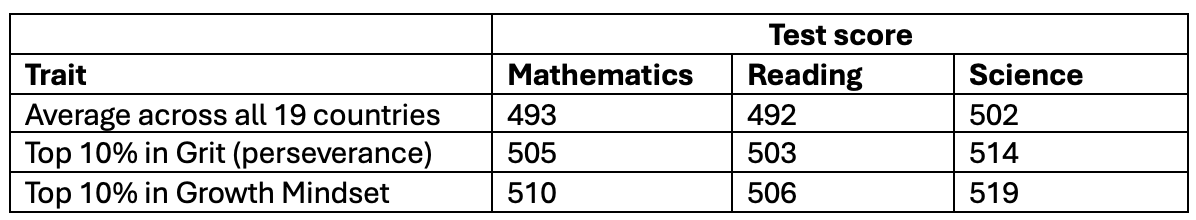

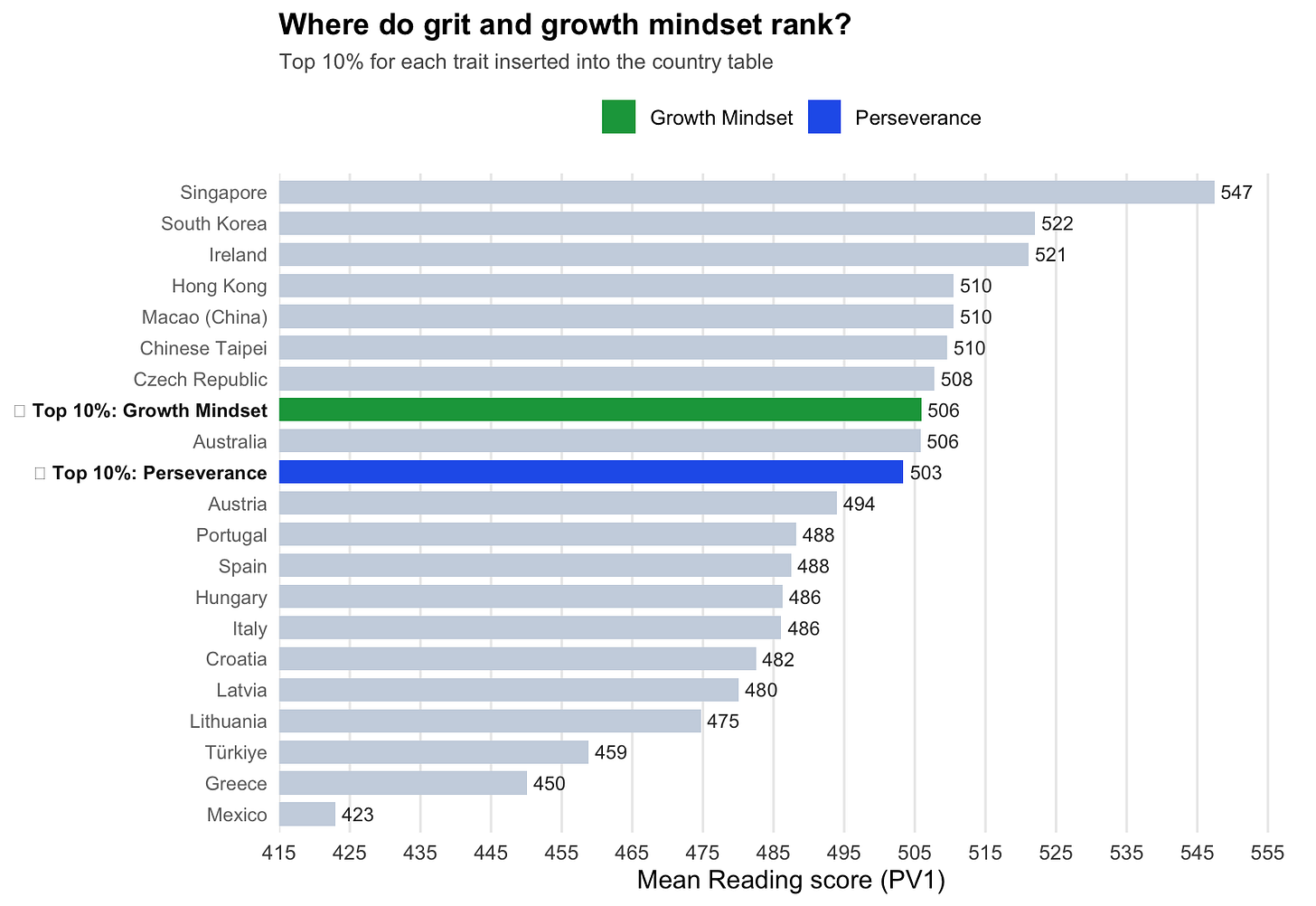

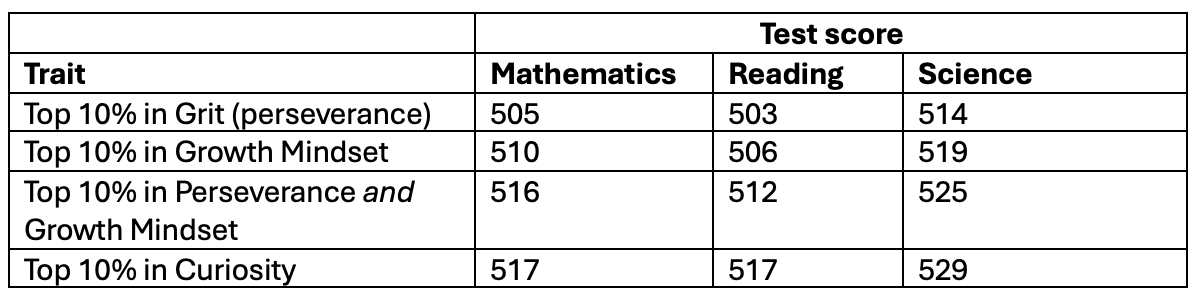

Let’s take the students who score in the top 10% for perseverance and compare them with those who score in the top 10% for growth mindset (obviously the same students might appear in both samples).

Then we see who gets the highest scores on mathematics, reading and science.

Time to cut to the results:

So, we have a clear winner.

It’s good to be gritty: students in the top 10% for perseverance outperform the average student in our sample by over 10 points across all three subjects.

That’s not bad going. According to PISA, 20 points equates to about a year’s worth of schooling.

Growth mindset does even better, though, adding between 14 and 17 points on top of the baseline across all subjects.
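For anyone who wants to see the mechanics, the top-10% comparison can be sketched as below. The field names and the toy numbers are invented for illustration, and the handling of ties at the cut-off is an assumption of this sketch.

```python
from statistics import mean


def top_decile_gap(students, trait, subject):
    """Mean subject score of the top 10% on `trait`, minus the whole-sample mean."""
    answered = [s for s in students if s[trait] is not None]
    ranked = sorted(answered, key=lambda s: s[trait], reverse=True)
    n_top = max(1, len(ranked) // 10)  # ties at the cut-off fall wherever the sort puts them
    top_mean = mean(s[subject] for s in ranked[:n_top])
    baseline = mean(s[subject] for s in students)
    return top_mean - baseline


# Toy data: 20 made-up students, not PISA results.
students = [
    {"growth_mindset": i, "perseverance": (i * 3) % 20, "math": 400 + 10 * i}
    for i in range(20)
]
for trait in ("perseverance", "growth_mindset"):
    print(trait, top_decile_gap(students, trait, "math"))
```

Because the same student can sit in the top 10% for more than one trait, the groups being compared overlap.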

A thought experiment: Let’s imagine that those in the top 10% of each trait – grit and growth mindset – were countries. Where would they fall in our list of 19?

They’re both doing well, but growth mindset edges it.

So, we’ve solved the conundrum. Who has the Education Secretary’s number? Someone WhatsApp her the graph and let’s dust down the growth mindset posters.

Not so fast!

There’s another concept we haven’t talked about yet (in this post, at least): curiosity.

The PISA test asks students how curious they are, too.

What if we took kids in the top 10% for curiosity? How would they compare?

Getting curious about which trait matters most

First, a word about curiosity.

Neuroscientists define it as the intrinsic motivation to learn.[4] It’s about wanting to do well rather than simply acing a test or doing it to get rewards (or avoid punishments).

Who’s curious, according to PISA? People who strongly agree with the following:

· I am curious about many different things.

· I like to ask questions.

· I like learning new things.

And disagree with these:

· I get frustrated when I have to learn the details of a topic.

· I find learning new things to be boring.

As a gritty, persevering, growth-minded curiosity scholar, I have tried to trace the origins of these questions (the dark winter evenings just fly by in our household). Their genealogy, as Nietzsche might have put it, isn’t always that clear.

Still, people who agree ‘I am curious about many different things’ are probably more curious than people who don’t agree with it.[5]

So, enough preamble. How do those in the top 10% of the curiosity ratings compare to the gritty and growth mindset students?

The big reveal

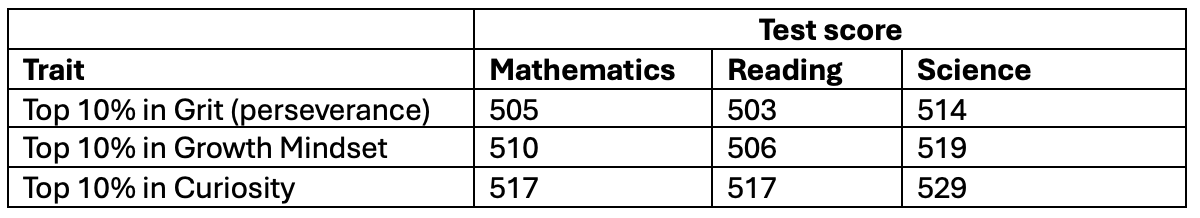

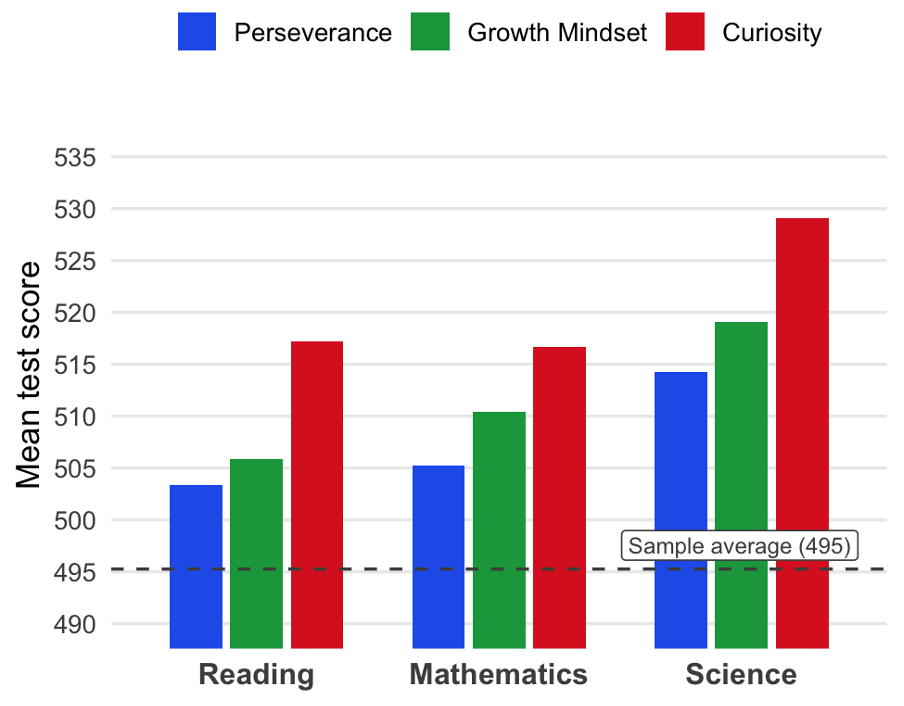

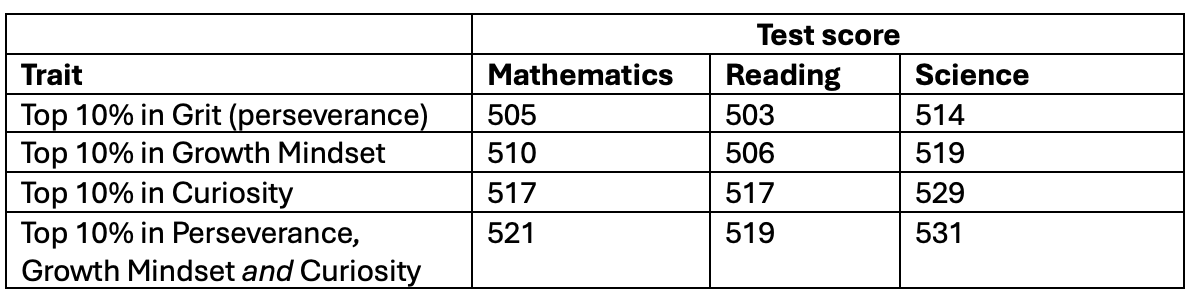

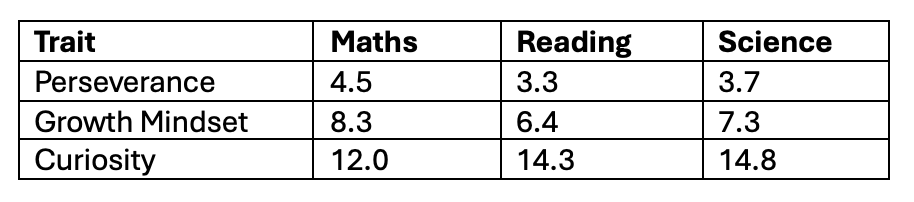

As before, we take the top 10% of students in grit, growth mindset and curiosity. We look at the average scores across the three tests. Then we put them in a table and…

Voilà.

And the same thing in the form of a helpful graph (note the dotted black line representing the average for our 19 countries across all three tests and the fact we’ve started the y-axis at 490 points):

Again, we have a clear winner.

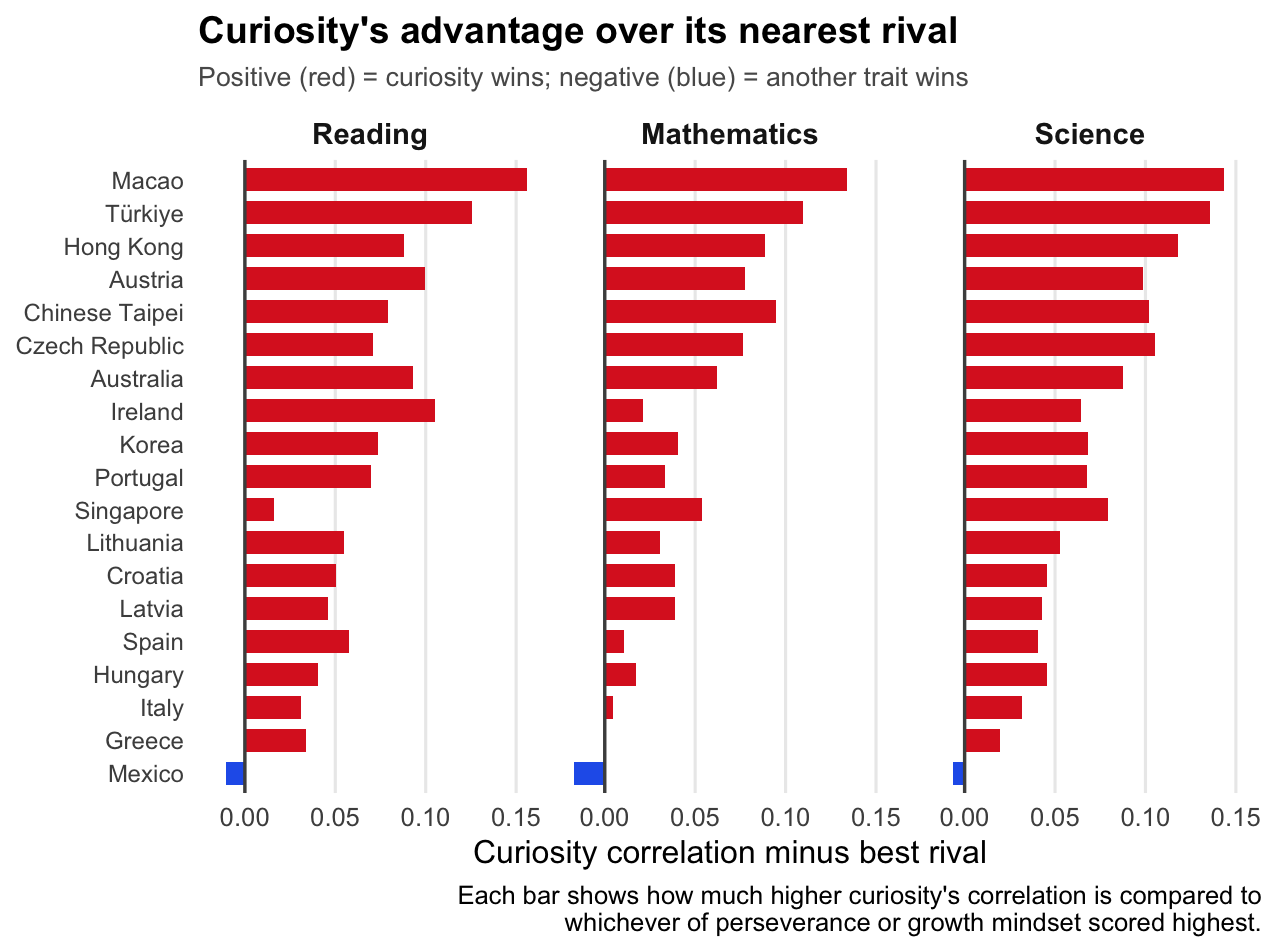

Not only that; the differences are sizeable. A few points in PISA can make a big difference. The seven points that separate curiosity from growth mindset – its nearest rival – in mathematics are meaningful.

Say you started off with Latvia on 483 points in 21st place. An extra 7 points would allow you to leapfrog Finland, Slovenia, Czech Republic, Australia, Austria, Poland, the UK, Denmark, and Belgium into 12th position.

In science, the difference is starker: if you could switch from being in the top 10% of grit to the top 10% of curiosity, you’d make almost a year of progress in an instant (ignoring all the caveats mentioned above about missing data and using only one of the ‘plausible value’ scores etc).

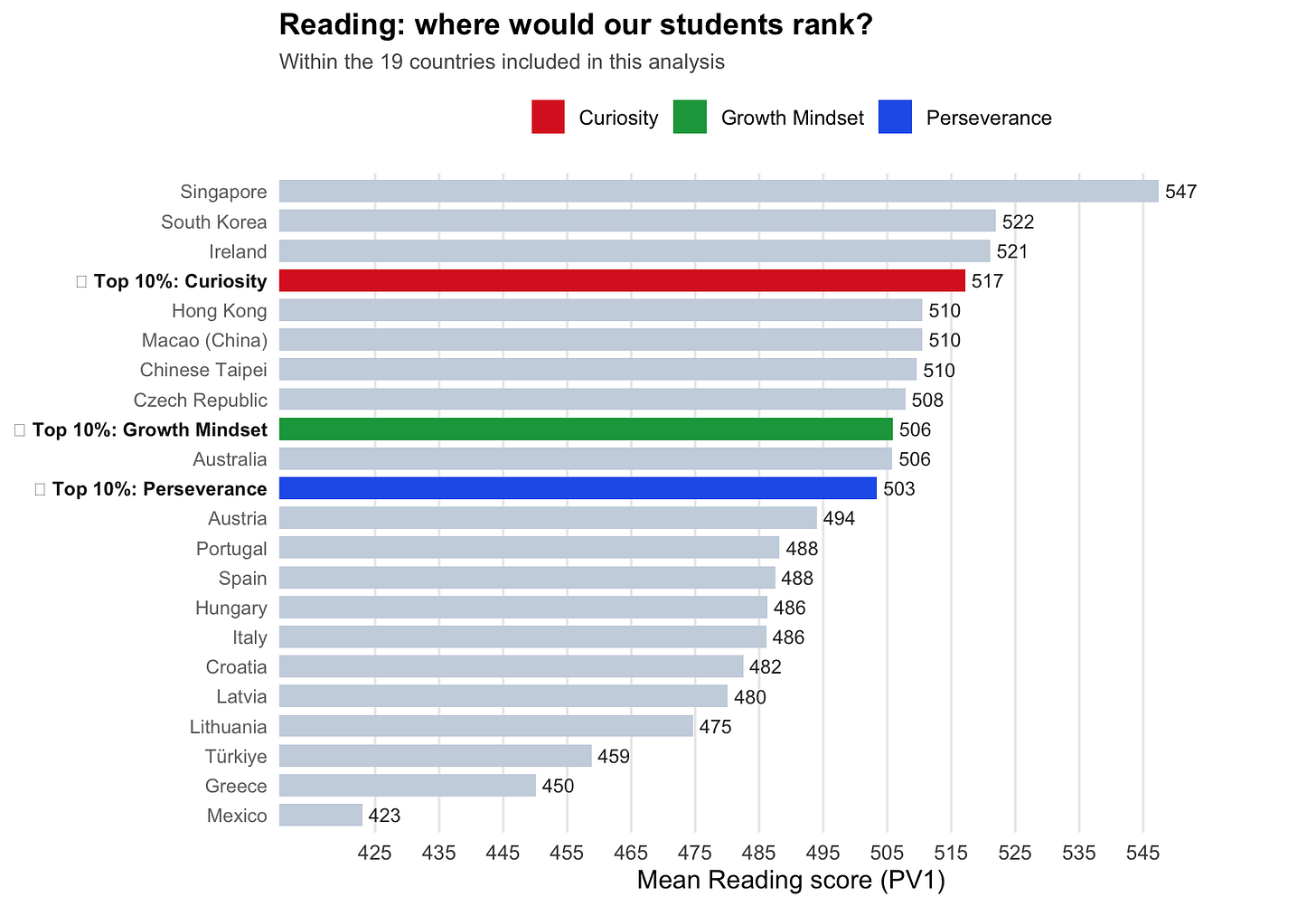

Insert curiosity into our ‘what if traits were countries’ table and the gap is clear:

Time for another thought experiment.

Let’s imagine that some schools went all-in on the educational interventions and promoted grit and growth mindset at the same time.

If we had a group of students who excelled in both areas, surely they would beat our curious students.

Right?

Flipping the table

Wrong.

Curiosity is no ordinary trait: it’s a learning superpower.

If we add the perseverance and growth mindset scores together and take the top 10% of students in both traits… these students still don’t reach the scores of the most curious students.

In fact, in reading, these gritty, growth mindset students are still the equivalent of three months behind their curious peers.

Let’s do a crazy thought experiment.

Let’s add together the perseverance, growth mindset and curiosity scores for all students. Take the top 10% again and compare them to the ones who are in the top 10% simply ranking by curiosity.

The difference?

This time, the triple-combo takes the crown, but there isn’t much in it. The difference is smaller than the gap between curiosity and either of its competitors.

Don’t worry about grit and growth mindset; you’d be as well simply concentrating on getting into the curiosity elite.
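The combined rankings used in this section can be sketched as follows. Adding the raw trait scores only makes sense if they sit on comparable scales, which this sketch simply assumes; the field names and numbers are made up.

```python
from statistics import mean


def top_decile_mean_by_composite(students, traits, subject):
    """Rank students by the sum of several trait scores and average the top 10%."""
    complete = [s for s in students if all(s[t] is not None for t in traits)]
    ranked = sorted(complete, key=lambda s: sum(s[t] for t in traits), reverse=True)
    n_top = max(1, len(ranked) // 10)
    return mean(s[subject] for s in ranked[:n_top])


# Ten made-up students: (perseverance, growth mindset, curiosity, reading score).
rows = [
    (0.5, 1.0, 2.0, 560), (1.5, 1.2, 0.1, 540), (0.2, 0.1, 1.5, 545),
    (-0.5, 0.3, 0.0, 480), (1.0, -1.0, -0.2, 470), (-1.0, -0.5, -1.2, 430),
    (0.0, 0.0, 0.4, 500), (0.3, 0.6, -0.3, 490), (-0.2, 0.9, 0.8, 510),
    (0.8, 0.4, 1.0, 530),
]
keys = ("perseverance", "growth_mindset", "curiosity", "reading")
students = [dict(zip(keys, row)) for row in rows]

grit_and_growth = top_decile_mean_by_composite(
    students, ["perseverance", "growth_mindset"], "reading"
)
curiosity_only = top_decile_mean_by_composite(students, ["curiosity"], "reading")
all_three = top_decile_mean_by_composite(
    students, ["perseverance", "growth_mindset", "curiosity"], "reading"
)
print(grit_and_growth, curiosity_only, all_three)
```

Passing a single trait gives the ordinary top-10% group, so the same function covers both sides of the comparison.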

Time to put 145,000 students into a line. And then another line…

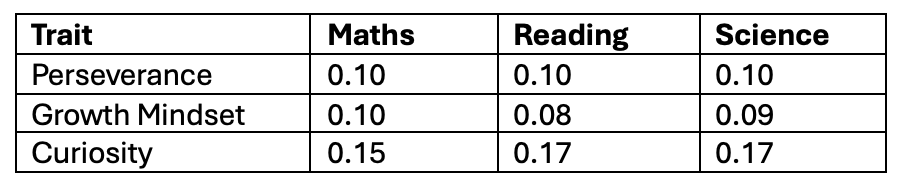

The top-10% comparison is the most intuitive way to look at the data, but there are two more rigorous tests worth mentioning — and they tell the same story.

The first is a correlation test.[6]

Let’s take 10 students and line them up by curiosity scores, then test scores.

If they’re in the same order both times, their correlation scores would be 1 – a perfect correlation.

Let’s imagine they’re in completely the opposite order. The student at the top of the first line would be at the bottom of the second, and so on. This time, the correlation would be -1 – a perfect negative correlation.

No relation between the two? Correlation is 0 – no relationship.

For context, the correlation between height and weight in adults is around 0.5: most people in a sample who are heavier are also taller.
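Here is the line-up idea as a small worked example. It computes a Pearson correlation on each student’s position in the two lines (which amounts to a rank correlation); the function and the ten positions are illustrative, not the code or data behind this post.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Position of each of 10 students in the curiosity line-up...
curiosity_position = list(range(1, 11))
# ...and three possible test-score line-ups.
same_order = list(range(1, 11))
opposite_order = list(range(10, 0, -1))
neighbours_swapped = [2, 1, 4, 3, 6, 5, 8, 7, 10, 9]

print(pearson(curiosity_position, same_order))                    # 1.0
print(pearson(curiosity_position, opposite_order))                # -1.0
print(round(pearson(curiosity_position, neighbours_swapped), 2))  # 0.94
```

Even with every pair of neighbours swapped, the correlation stays above 0.9, which gives a sense of how much disorder it takes to reach the values seen in real survey data.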

Here’s how our three traits score:

First thing to note – all correlations are positive. Higher traits = higher test scores.

Second thing – all the scores are pretty low.

As a rough guide, correlations below 0.2 are often considered small in social science research, but in the case of the PISA test data, this is to be expected. Test scores are complicated, affected by hundreds of factors – teaching quality, home environment, sleep, what someone posted about you on social media the morning of the test.

A single psychological trait isn’t going to explain everything.

But within that context, across all subjects curiosity’s correlations are consistently larger than the alternatives – up to double, in some cases.

This finding holds across our sample.

Curiosity has a stronger correlation with all three test scores than growth mindset in all 19 countries and beats perseverance in 17 of 19.

Wherever you look, the pattern holds: curiosity correlates most strongly with test scores.

Note that this doesn’t mean curiosity causes higher test results, merely that the two go together. A rigorous analysis would look at curiosity’s effects over the longer term. We can’t rule out the possibility that test success causes higher curiosity (or, of course, that both are caused by another factor).

But what if curiosity is piggybacking on the other two?

Correlation testing tells us that when one thing goes up, so does the other.

But we can do a bit better than this.

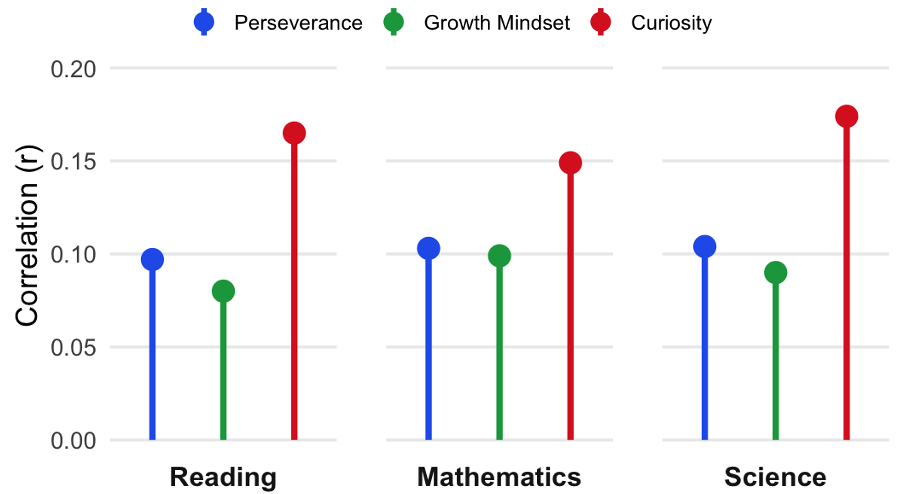

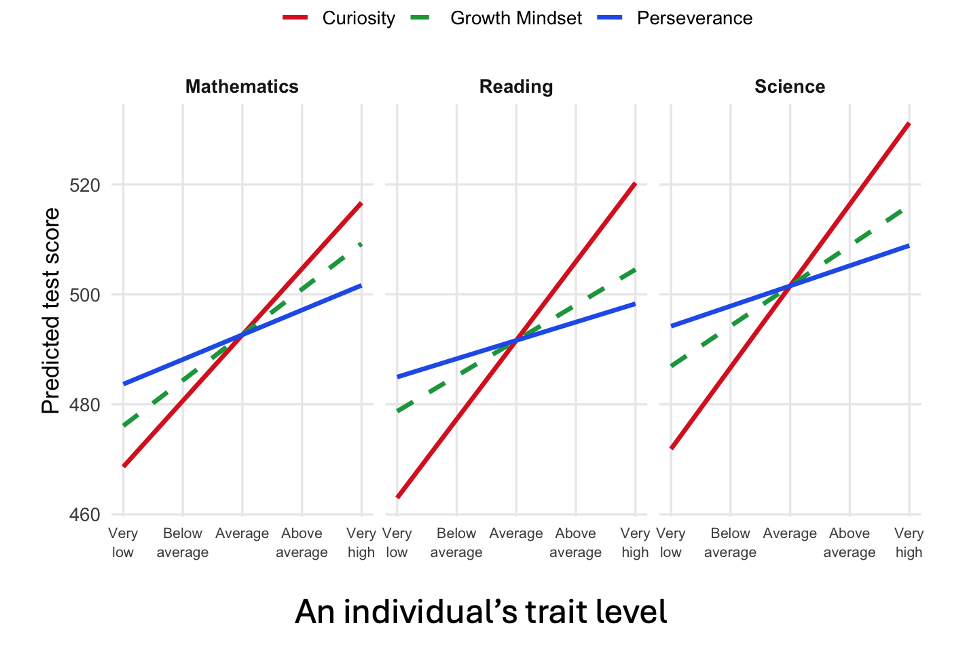

A regression model lets us ask a more specific question: given what we know about a student’s curiosity, how well can we predict their test score?

Even more importantly, it tackles another concern: what if curiosity only looks stronger because curious students also happen to be grittier or have growth mindset?

Well, we can look at how curiosity affects test scores while ‘controlling’ for perseverance and growth mindset. Effectively, we take all the students, imagine they have identical scores for perseverance and growth mindset and plot their curiosity against their score in each subject.

Then we do the same for growth mindset (keeping curiosity and perseverance scores constant) and for perseverance.

The steeper the slope, the more dramatic the effect, say, being more curious has on your mathematics score.[7]

In every case, we can see that curiosity – the red line – is the steepest.

The gradients (or ‘betas’) of the lines tell us how many extra points you get in each subject when your trait score – curiosity, perseverance or growth mindset – increases.
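A minimal sketch of this kind of model, assuming NumPy and ordinary least squares with the three traits entered together. The data are simulated and the effect sizes are invented for the example; it ignores sampling weights and the clustering of students in schools, and it is not the code behind the figures in this post.

```python
import numpy as np


def trait_betas(traits, scores):
    """Points gained per one-standard-deviation rise in each trait, others held fixed.

    traits: array of shape (n_students, n_traits); scores: array of shape (n_students,).
    """
    traits = np.asarray(traits, dtype=float)
    scores = np.asarray(scores, dtype=float)
    # Standardise each trait so a slope reads as 'points per standard deviation'.
    z = (traits - traits.mean(axis=0)) / traits.std(axis=0)
    design = np.column_stack([np.ones(len(z)), z])  # first column is the intercept
    coefs, *_ = np.linalg.lstsq(design, scores, rcond=None)
    return coefs[1:]  # drop the intercept


# Simulated students: perseverance is deliberately correlated with curiosity.
rng = np.random.default_rng(0)
n = 20_000
curiosity = rng.normal(size=n)
perseverance = 0.5 * curiosity + rng.normal(size=n)
growth = rng.normal(size=n)
scores = (500 + 14 * curiosity + 3 * perseverance + 6 * growth
          + rng.normal(scale=30, size=n))

betas = trait_betas(np.column_stack([curiosity, perseverance, growth]), scores)
for name, beta in zip(("curiosity", "perseverance", "growth mindset"), betas):
    print(f"{name}: {beta:.1f} points per standard deviation")
```

Because all three traits are in the model at once, each slope describes what happens when that trait changes while the other two stay put, which is the sense of ‘controlling’ used above.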

A student who moves from average curiosity to one standard deviation above (i.e., from the 50th percentile to the 84th), with perseverance and growth mindset staying the same, would score 14 points higher in reading. This is equivalent to about 9 months of schooling, by PISA’s measure.

Conclusion? Get curious.

In comparison, going from average to one standard deviation above in growth mindset adds just over six points.

The same shift in perseverance adds only half that.

The three traits aren’t just measuring the same thing in different ways: curiosity is doing something distinct.

And when it comes to PISA test scores, it makes a real difference.

The upshot

None of this means perseverance and growth mindset are useless.

They’re not.

And none of these traits operate in isolation — you can be curious and give up easily, or incredibly gritty about the wrong things. The strongest performers were those in the top 10% on all three measures together, which shouldn’t surprise anyone.

But if you had to bet on one trait — if you were designing a school culture, or thinking about how to raise a child, or deciding where to put your energy — the data makes a fairly unambiguous suggestion.

But wait a minute. Are we really measuring curiosity or grit or growth mindset here?[8] For curiosity, it’s hard to say for sure without doing a battery of other reliable tests (which don’t really exist).

But we probably can say that the students who score highest on curiosity have the most positive attitude towards curiosity.

And, therefore, the students who most strongly think curiosity is a good thing are likely to do better on the PISA tests than those who strongly think perseverance or growth mindset are good things.

Curiosity isn’t just an add-on. It isn’t something to tag onto your education policy or school’s mission statement. It shouldn’t be an afterthought to grit or growth mindset.

Having curiosity might be the thing that matters most.

Which raises an awkward question for anyone who spent the last decade putting up growth mindset posters, or the last year giving assemblies about grit: why aren’t we talking about curiosity?

Acknowledgements

My extremely patient PhD supervisor Dr Richard Brock looked over the code and post beforehand, and made lots of very sensible suggestions about how to improve the method to make it more robust. My excuses for taking coding shortcuts at every stage appear in the footnotes below. Run the full thing – with weightings, all the PVs and an MLM – and you’ll get almost the same values, honest.

Sorry Richard! All errors are, obviously, mine.

Endnotes and references:

[1] Carol Dweck (2006) Mindset: The New Psychology of Success

[2] Angela Duckworth (2016) Grit: The Power of Passion and Perseverance

[3] Also, using all the PVs is complicated, takes a long time and my computer gets very hot while it’s doing it. The analysis is unweighted, too. PISA’s complex sampling design ideally requires student sampling weights to correct for unequal probability of selection, which would slightly widen our confidence intervals but is unlikely to change the substantive findings.

[4] E.g. Baranes, A., Oudeyer, P.-Y., & Gottlieb, J. (2015). Eye movements reveal epistemic curiosity in human observers. Vision Research, 117, 81–90; Gruber, M. J., Gelman, B. D., & Ranganath, C. (2014). States of Curiosity Modulate Hippocampus-Dependent Learning via the Dopaminergic Circuit. Neuron, 84(2), 486–496; Van Lieshout, L. L., De Lange, F. P., & Cools, R. (2020). Why so curious? Quantifying mechanisms of information seeking. Current Opinion in Behavioral Sciences, 35, 112–117.

[5] See Note 8.

[6] Growth mindset scores were non-normally distributed, so strictly we should use a rank-based correlation test for these. Spearman rank correlations tell a similar story, though.

[7] These are very simple linear models. One thing a fuller analysis would do is also control for socioeconomic background — wealthier students tend to score higher on tests and may also report higher curiosity, simply because they have more opportunities to pursue their interests. We haven’t done that here. It’s possible that some of curiosity’s apparent advantage reflects this overlap. That said, the consistency of the finding across 19 very different countries — from Mexico to Singapore, from Greece to South Korea — makes a pure wealth explanation harder to sustain. A more rigorous analysis would also use multilevel modelling (MLM), which accounts for the fact that students aren’t independent observations — they’re clustered in schools, which are clustered in countries, each with their own characteristics. Our simple regression treats all 145,000 students as if they were independent, which slightly overstates our confidence in the results. In practice, the direction and rough magnitude of the findings are unlikely to change, but the standard errors would be larger and the significance thresholds harder to reach. Think of it this way: knowing that two students from the same school both score highly tells you less than knowing the same about two students from different schools on opposite sides of the world — the MLM approach adjusts for this.

[8] The PISA questionnaire uses what’s called ‘self-report’ to measure traits. You can’t cheat on the test – or so the idea goes. You can tick strongly agree on all the curiosity or growth mindset questions, though. Are those who score highest on curiosity really the most curious students? We’ve got no way of knowing. Many people have tried other ways to measure curiosity; few have succeeded convincingly. It’s easier to make the case that we’re genuinely measuring grit or growth mindset on PISA than curiosity. Grit and growth mindset are both psychological constructs. They’re defined by getting a high score on a questionnaire. It’s a bit more debatable with curiosity. There are several competing ‘instruments’ for measuring curiosity (and PISA have created their own). There are even people (like me) who disagree that we should think of curiosity purely as a psychological construct – it’s a bit more complicated than that.